How Opus 4.6 Development Skills Enforce Best Practices While Preserving Expert Oversight

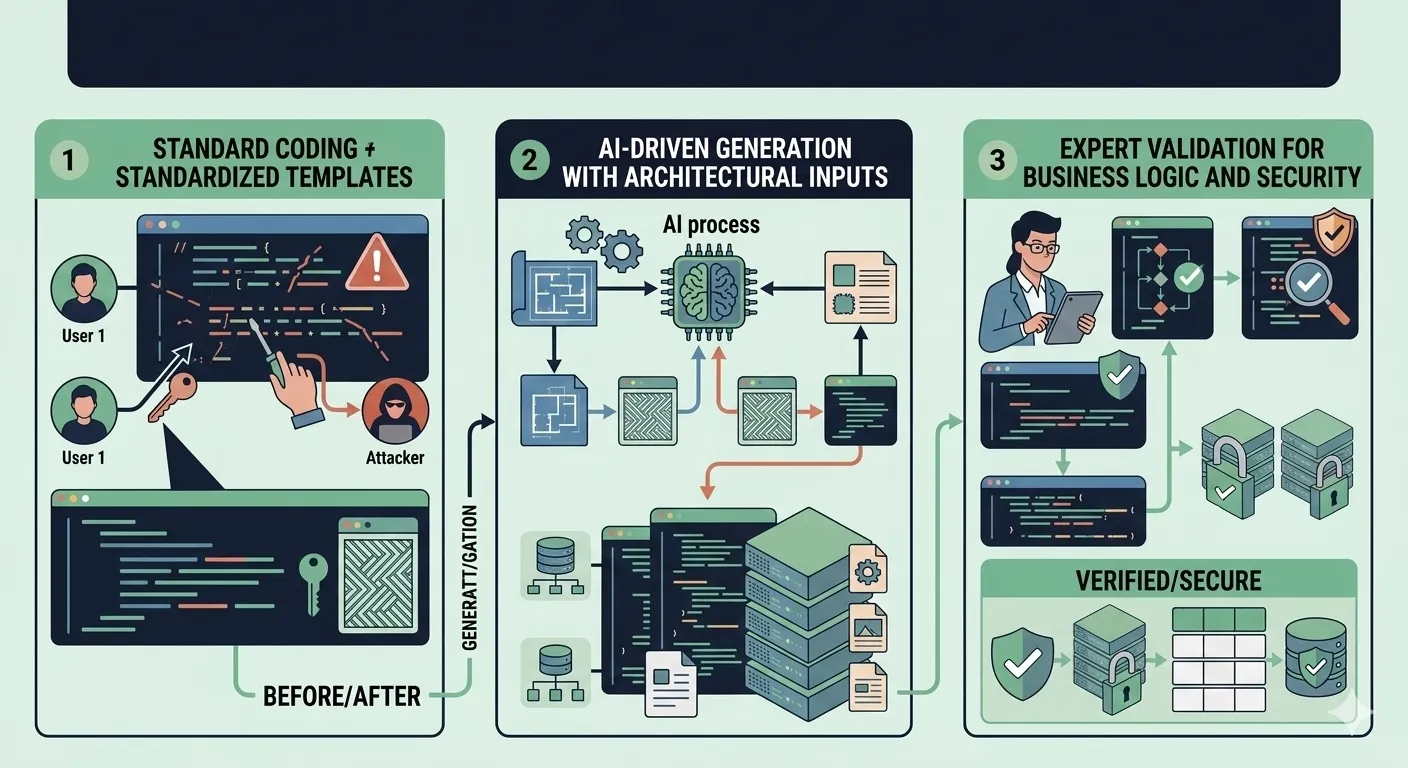

There is a widening gap between what AI can generate and what production systems actually need. Large language models produce syntactically correct code at remarkable speed, but syntactic correctness is not the same as architectural soundness. A function that compiles is not necessarily a function that belongs in your codebase. This disconnect becomes dangerous at scale, when dozens of AI-generated files accumulate without consistent structure, naming conventions, or dependency rules.

Development skills solve this problem. A skill is a reusable instruction template that constrains how an AI model generates code, enforcing specific architectural patterns, file organization rules, and coding standards before a single line is written. With Opus 4.6, these skills reach a new level of effectiveness because the model's instruction-following capacity handles complex, multi-layered constraints without silently dropping rules. But skills are not a replacement for human expertise. They handle the structural and mechanical aspects of code generation while freeing expert developers to focus where they add irreplaceable value: business logic correctness, security boundary validation, and domain-specific edge cases.

What Are Development Skills in AI-Assisted Coding?

A development skill is a structured document that tells an AI model exactly how to generate code for a specific context. Unlike a casual prompt that says "build me a REST API," a skill defines the architectural layers, file naming conventions, dependency direction rules, error handling patterns, and validation strategies the generated code must follow. Think of it as a coding standards document that the AI reads and enforces in real time, every time it generates output.

The difference between raw prompting and skill-guided generation is repeatability. When you prompt an AI model with "create a Node.js API with user authentication," you get a different structure every time. One run might put everything in a single app.js file. The next might create a folder structure that vaguely resembles MVC. A third might mix Express middleware with business logic in the same function. Skills eliminate this variance by encoding decisions that should never change between runs: where files go, how layers communicate, which patterns are mandatory.

Anatomy of a Well-Written Skill

An effective skill contains several distinct sections that work together to constrain AI output. The constraints section defines non-negotiable rules: one class per file, no circular dependencies, interfaces defined before implementations. The patterns section specifies which design patterns to apply: CQRS for command/query separation, repository pattern for data access, middleware pipeline for cross-cutting concerns. The validation rules dictate where input validation occurs (Application layer, never Domain) and which libraries to use. The output format section specifies file naming conventions, directory structure, and export patterns.

Opus 4.6 handles these multi-layered skills with particular fidelity. Earlier models would follow the first few constraints and gradually drift as the output grew longer. Opus 4.6's extended context window and improved instruction adherence mean it can maintain compliance with 30+ rules across thousands of lines of generated code without silently dropping constraints midway through generation.

Why Raw AI Prompting Fails at Scale

The inconsistency problem is the single biggest obstacle to adopting AI code generation in professional teams. When one developer prompts the AI with slightly different wording, the output structure changes. When the same developer prompts identically on different days, model temperature and context differences produce variations. Over weeks, a codebase assembled from raw AI prompts becomes a patchwork of conflicting patterns, inconsistent naming, and tangled dependencies.

A common mistake developers make is trusting unstructured AI output without guardrails. They see a working endpoint and assume the underlying architecture is sound. But "working" and "maintainable" are different standards. An endpoint that returns correct JSON today but violates dependency inversion will become a refactoring nightmare when the data source changes. An authentication middleware that mixes authorization logic with session management will create security blind spots that no amount of testing catches easily.

Consider a real-world scenario: a team of eight developers adopts AI-assisted coding without establishing shared skills. Developer A generates services that return raw database entities. Developer B generates services that map to DTOs. Developer C generates services that throw HTTP exceptions from the domain layer. Within two sprints, the codebase has three incompatible patterns for the same operation. Code reviews become a bottleneck because every pull request requires architectural discussion that should have been settled before generation began.

The Cost of Inconsistency in Production

Inconsistent architecture is not just an aesthetic problem. It creates measurable performance and scalability costs. When different modules handle errors differently, monitoring systems cannot aggregate failure patterns reliably. When some services use connection pooling and others create new connections per request, database performance degrades unpredictably under load. When validation occurs at different layers across different modules, security vulnerabilities cluster at the boundaries where assumptions change. The review burden alone can slow delivery velocity by 30-50%, as senior developers spend more time correcting structural issues than evaluating business logic.

Building a Node.js Clean Architecture Skill: Practical Example

Let us walk through a concrete skill that instructs Opus 4.6 to scaffold a Node.js REST API enforcing clean architecture. This skill encodes six categories of constraints that together guarantee structural consistency across every generated module.

The skill begins with file structure constraints. Every generated project must follow a strict directory layout with four top-level layers: domain/, application/, infrastructure/, and api/. Each layer has its own subdirectories for entities, use cases, repositories, and controllers respectively. The rule is absolute: one class per file, one file per concern, no exceptions for DTOs, commands, or interfaces.

Next, dependency direction rules enforce that imports only flow inward. The api/ layer can import from application/, but application/ never imports from api/. The domain/ layer imports nothing from any other layer. This is validated structurally: if the AI generates an import statement that violates this rule, the skill instructs it to refactor immediately using dependency injection.
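The inward-only rule is mechanical enough to check with a few lines of code. The following is a minimal sketch, not part of the skill itself: `ALLOWED_IMPORTS`, `layerOf`, and `findViolations` are hypothetical names, and only two entries of the allow-list (api/ may import application/, domain/ imports nothing) come from the text above. The rest are assumptions you would adjust to your own layering.

```javascript
// Hypothetical allow-list: which layers each layer may import from.
// Only "api -> application" and "domain imports nothing" come from the text;
// the remaining entries are assumptions.
const ALLOWED_IMPORTS = {
  domain: [],
  application: ["domain"],
  infrastructure: ["application", "domain"],
  api: ["application", "domain"],
};

// Returns the layer name for a path such as "src/api/routes/userRoutes.js",
// or null when the path is outside the four layers.
function layerOf(filePath) {
  const match = filePath.match(/(?:^|\/)(domain|application|infrastructure|api)\//);
  return match ? match[1] : null;
}

// edges: [{ from, to }] where each entry describes one import statement.
function findViolations(edges) {
  return edges.filter(({ from, to }) => {
    const source = layerOf(from);
    const target = layerOf(to);
    if (!source || !target || source === target) return false;
    return !ALLOWED_IMPORTS[source].includes(target);
  });
}

const violations = findViolations([
  {
    from: "src/api/controllers/UserController.js",
    to: "src/application/commands/CreateUser/CreateUserHandler.js",
  },
  {
    from: "src/domain/entities/User.js",
    to: "src/infrastructure/repositories/UserRepository.js",
  },
]);

// Only the domain -> infrastructure import is reported.
console.log(violations.map((v) => `${v.from} -> ${v.to}`));
```

A check like this could run in CI as a backstop, so that a dropped instruction shows up as a failed build rather than as a review comment.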

Interface-first design requires every repository, external service, and data source to be defined as an interface in the domain/ or application/ layer before any implementation exists. Implementations live exclusively in infrastructure/. This guarantees that business logic never couples to specific databases, HTTP clients, or third-party services.
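JavaScript has no interface keyword, so one common way to express the contract is a base class whose methods throw until overridden. The sketch below assumes that convention; the method names `findByEmail` and `save`, the `InMemoryUserRepository` class, and the `isEmailTaken` helper are illustrative and not taken from the skill. In a real project each class would sit in its own file, as the one-class-per-file rule requires.

```javascript
// domain/interfaces/IUserRepository.js
// Contract only: no storage details, every method must be overridden.
class IUserRepository {
  async findByEmail(email) {
    throw new Error("IUserRepository.findByEmail is not implemented");
  }
  async save(user) {
    throw new Error("IUserRepository.save is not implemented");
  }
}

// infrastructure/repositories/ (hypothetical in-memory implementation)
class InMemoryUserRepository extends IUserRepository {
  constructor() {
    super();
    this.usersByEmail = new Map();
  }
  async findByEmail(email) {
    return this.usersByEmail.get(email) ?? null;
  }
  async save(user) {
    this.usersByEmail.set(user.email, user);
    return user;
  }
}

// Business logic sees only the contract, so the storage can be swapped
// without touching this function.
async function isEmailTaken(userRepository, email) {
  return (await userRepository.findByEmail(email)) !== null;
}

const repository = new InMemoryUserRepository();
const demo = repository
  .save({ email: "ada@example.com", name: "Ada" })
  .then(() => isEmailTaken(repository, "ada@example.com"));

demo.then((taken) => console.log(taken)); // true
```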

The skill enforces the CQRS pattern with handler separation: every write operation is a Command with a dedicated CommandHandler, and every read operation is a Query with a dedicated QueryHandler. Commands return Result objects, never raw entities. Handlers are the only place where use case orchestration occurs.
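As a rough illustration of the command side, the sketch below shows a command, its handler, and a `Result` wrapper. The shape of `Result` (`ok`, `value`, `error`), the `EMAIL_ALREADY_REGISTERED` code, and the stub repository are assumptions made for the example; the skill as described only requires that commands return Result objects rather than entities.

```javascript
// Hypothetical Result type: reports success or failure without exposing entities.
class Result {
  constructor(ok, value, error) {
    this.ok = ok;
    this.value = value;
    this.error = error;
    Object.freeze(this);
  }
  static success(value) {
    return new Result(true, value, null);
  }
  static failure(error) {
    return new Result(false, null, error);
  }
}

// application/commands/CreateUser/CreateUserCommand.js
class CreateUserCommand {
  constructor({ email, name }) {
    this.email = email;
    this.name = name;
    Object.freeze(this); // commands are immutable messages
  }
}

// application/commands/CreateUser/CreateUserHandler.js
class CreateUserHandler {
  constructor(userRepository) {
    this.userRepository = userRepository; // injected, never imported from infrastructure
  }
  async handle(command) {
    const existing = await this.userRepository.findByEmail(command.email);
    if (existing) return Result.failure("EMAIL_ALREADY_REGISTERED");
    const saved = await this.userRepository.save({ email: command.email, name: command.name });
    return Result.success({ userId: saved.id }); // an identifier, not the entity
  }
}

// Stub repository for the demo only; it assigns sequential ids.
const users = new Map();
const stubRepository = {
  async findByEmail(email) {
    return users.get(email) ?? null;
  },
  async save(user) {
    const saved = { id: users.size + 1, ...user };
    users.set(saved.email, saved);
    return saved;
  },
};

const handler = new CreateUserHandler(stubRepository);
const outcome = (async () => {
  const command = new CreateUserCommand({ email: "ada@example.com", name: "Ada" });
  const first = await handler.handle(command);
  const second = await handler.handle(command);
  return { first, second };
})();

outcome.then(({ first, second }) => console.log(first.ok, second.error));
// true EMAIL_ALREADY_REGISTERED
```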

Validation rules specify that all input validation uses Zod schemas defined at the Application layer. Domain entities enforce their own invariants through constructor validation, but external input parsing is never the Domain's responsibility. Error responses follow a standardized envelope format.
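To make the split between input parsing and domain invariants concrete, here is a dependency-free sketch. The hand-written `parseCreateUserInput` function stands in for the Zod schema the skill calls for, purely so the example runs without installing anything; the field names, the messages, and the envelope shape returned by `toErrorEnvelope` are assumptions, since the skill's actual envelope format is not given here.

```javascript
// application/validation/createUserSchema.js
// Hand-written stand-in for a Zod schema so the sketch has no dependencies.
function parseCreateUserInput(input) {
  const issues = [];
  if (typeof input?.email !== "string" || !/^[^@\s]+@[^@\s]+$/.test(input.email)) {
    issues.push({ field: "email", message: "must be a valid email address" });
  }
  if (typeof input?.name !== "string" || input.name.trim() === "") {
    issues.push({ field: "name", message: "must be a non-empty string" });
  }
  return issues.length > 0
    ? { success: false, issues }
    : { success: true, data: { email: input.email, name: input.name.trim() } };
}

// domain/entities/User.js
// The entity guards its own invariants and knows nothing about request parsing.
class User {
  constructor({ id, email, name }) {
    if (!id) throw new Error("User requires an id");
    if (!email) throw new Error("User requires an email");
    this.id = id;
    this.email = email;
    this.name = name;
    Object.freeze(this);
  }
}

// Hypothetical standardized envelope for error responses.
function toErrorEnvelope(code, issues) {
  return { error: { code, details: issues } };
}

const rejected = parseCreateUserInput({ email: "not-an-email", name: " " });
const accepted = parseCreateUserInput({ email: "ada@example.com", name: " Ada " });

console.log(JSON.stringify(toErrorEnvelope("VALIDATION_FAILED", rejected.issues)));
console.log(new User({ id: 1, ...accepted.data }).name); // "Ada"
```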

Finally, error handling patterns define a custom error hierarchy: DomainError, ApplicationError, InfrastructureError. Each layer catches and wraps errors from inner layers, adding context without exposing implementation details. The API layer has a single error-handling middleware that maps error types to HTTP status codes.
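A small sketch of what such a hierarchy might look like follows. The three class names come from the skill description; the specific status codes, the `toHttpStatus` helper, and the response body are assumptions chosen for illustration. The `errorHandler` function uses the four-argument signature Express expects for error-handling middleware, but nothing here requires Express to run.

```javascript
// One class per layer; in a real project each lives in its layer's errors/ folder.
class DomainError extends Error {
  constructor(message, options) {
    super(message, options);
    this.name = "DomainError";
  }
}
class ApplicationError extends Error {
  constructor(message, options) {
    super(message, options);
    this.name = "ApplicationError";
  }
}
class InfrastructureError extends Error {
  constructor(message, options) {
    super(message, options);
    this.name = "InfrastructureError";
  }
}

// Wrapping adds context and keeps the original error as `cause`,
// so driver-level details stay out of the message sent to clients.
function wrapStorageFailure(cause) {
  return new InfrastructureError("User storage is unavailable", { cause });
}

// Hypothetical mapping; the status codes are assumptions, not part of the skill.
function toHttpStatus(error) {
  if (error instanceof DomainError) return 422;
  if (error instanceof ApplicationError) return 400;
  if (error instanceof InfrastructureError) return 503;
  return 500;
}

// api/middleware/errorHandler.js: the single place errors become HTTP responses.
function errorHandler(err, req, res, next) {
  const status = toHttpStatus(err);
  const message = status === 500 ? "Unexpected error" : err.message;
  res.status(status).json({ error: { type: err.name, message } });
}

const wrapped = wrapStorageFailure(new Error("ECONNREFUSED 10.0.0.5:5432"));
console.log(toHttpStatus(wrapped), wrapped.message); // 503 User storage is unavailable
```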

Skill Output vs. Raw Prompting Output

The structural difference is immediately visible. When generating a user registration feature, the skill-guided output produces this directory structure:

    src/
      domain/
        entities/
          User.js
        interfaces/
          IUserRepository.js
        errors/
          DomainError.js
      application/
        commands/
          CreateUser/
            CreateUserCommand.js
            CreateUserHandler.js
        validation/
          createUserSchema.js
        errors/
          ApplicationError.js
      infrastructure/
        repositories/
          UserRepository.js
        errors/
          InfrastructureError.js
      api/
        controllers/
          UserController.js
        middleware/
          errorHandler.js
        routes/
          userRoutes.js

The same prompt without a skill typically produces a flat structure with two to four files: a route file, a controller that contains both validation and database queries, and perhaps a model file that mixes schema definition with business logic. The skill-guided output is immediately reviewable because reviewers know exactly where to look for each concern.

The Expert Review Checkpoint

A well-designed skill does not pretend to replace human judgment. Instead, it explicitly marks the boundaries where human review is essential. The skill instructs the AI to leave // REVIEW: business rule markers wherever domain-specific logic requires validation by someone who understands the business context. Similarly, // REVIEW: security boundary markers appear at authentication checks, authorization gates, and data sanitization points.

This hybrid model outperforms both full-manual and full-AI approaches. Full-manual development is slower but catches everything. Full-AI development is fast but misses domain nuance. The skill-guided approach lets the AI handle the 60-70% of code that is structural boilerplate while concentrating expert attention on the 30-40% that requires business knowledge, security awareness, and edge case reasoning.

Comparison: AI Development With vs. Without Skills

The following table summarizes the differences between raw AI prompting and skill-guided AI development across the dimensions that matter most to engineering teams:

| Aspect | Raw Prompting | Skill-Guided Generation |
|---|---|---|
| Architecture consistency | Low: varies per prompt and session | High: enforced by skill constraints |
| Code review burden | High: reviewers check everything | Moderate: reviewers focus on logic |
| Pattern adherence | Inconsistent across developers | Uniform across all team members |
| Expert review scope | Every line, including boilerplate | Business logic and edge cases only |
| Onboarding new developers | Slow: must learn implicit conventions | Fast: skill documents the architecture |
| Dependency rule violations | Frequent and hard to catch | Prevented at generation time |
| Refactoring cost | High: inconsistent foundations | Low: uniform structure throughout |

The review burden reduction alone justifies the investment in writing skills. When reviewers trust that the file structure, naming conventions, and dependency directions are correct by construction, they can dedicate their full attention to the questions that actually require human judgment: Does this business rule handle all edge cases? Is this authorization check sufficient? Does this query perform acceptably under projected load?

When Skills Are Not the Right Answer

Skills add overhead that is not always justified. For rapid prototyping, when you need to validate an idea in hours rather than days, raw prompting is faster. The generated code is throwaway, so architectural consistency does not matter. Similarly, one-off scripts for data migration, log analysis, or environment setup do not benefit from skill constraints because they run once and are discarded. Research spikes, where you are exploring an unfamiliar API or library to understand its behavior, benefit more from conversational iteration than from structural constraints. The rule is straightforward: use skills when the generated code will live in a shared codebase and face ongoing maintenance.

The Expert Review Layer: Why It Cannot Be Removed

Skills guarantee structure, convention, and mechanical correctness. They enforce that files go in the right directories, that imports flow in the right direction, that handlers follow the prescribed pattern. What they cannot guarantee is whether the code does the right thing in a business context.

Consider an architecture scenario in a fintech API that processes currency conversions. The skill correctly scaffolds a ConvertCurrencyHandler with proper CQRS structure, Zod validation, repository interfaces, and error handling. The generated code rounds conversion results to two decimal places using standard JavaScript floating-point arithmetic. This is structurally perfect and logically wrong. Currency rounding rules vary by currency: Japanese yen uses zero decimal places, Kuwaiti dinar uses three, and certain interbank calculations require five. The skill cannot encode this knowledge because it is domain-specific business logic that changes per client, per regulatory jurisdiction, and per product line.
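The rounding problem is easy to reproduce. The sketch below first shows how two-decimal floating-point rounding goes wrong, then rounds a decimal string to each currency's minor unit using integer arithmetic. The `MINOR_UNITS` table and the `toMinorUnits` function are hypothetical, the table covers only the currencies mentioned above plus USD, and half-up rounding is an assumption: the correct rounding mode is exactly the kind of decision a domain expert has to confirm.

```javascript
// Hypothetical minor-unit table; a real system would load maintained ISO 4217 data.
const MINOR_UNITS = { USD: 2, JPY: 0, KWD: 3 };

// 1.005 has no exact binary representation, so naive two-decimal
// rounding comes out one cent low.
const naive = (1.005).toFixed(2); // "1.00"

// Rounds a non-negative decimal string half-up to the currency's minor unit
// and returns the amount in minor units as a BigInt (no floating point involved).
function toMinorUnits(amount, currency) {
  const digits = MINOR_UNITS[currency];
  if (digits === undefined) throw new Error(`Unsupported currency: ${currency}`);
  if (!/^\d+(\.\d+)?$/.test(amount)) throw new Error(`Invalid amount: ${amount}`);

  const [whole, fraction = ""] = amount.split(".");
  const padded = fraction.padEnd(digits + 1, "0");
  const truncated = BigInt(whole + padded.slice(0, digits));
  // REVIEW: business rule - half-up is an assumption; confirm the rounding mode.
  return padded[digits] >= "5" ? truncated + 1n : truncated;
}

console.log(naive);                             // "1.00"
console.log(toMinorUnits("1.005", "USD"));      // 101n     (1.01 USD)
console.log(toMinorUnits("1234.5678", "JPY"));  // 1235n    (1235 JPY)
console.log(toMinorUnits("1234.5678", "KWD"));  // 1234568n (1234.568 KWD)
```

Keeping amounts as integers in minor units sidesteps floating-point drift, but it does not answer which rounding mode or precision a given client or regulator requires.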

Security edge cases present the same challenge. A skill can enforce that authentication middleware exists on every protected route. It cannot determine whether the authorization logic correctly handles role inheritance, temporary access grants, or the specific business rules around data access that differ between organizations. These decisions require someone who understands the threat model, the compliance requirements, and the operational context of the deployed system.

Designing Review Checkpoints Into Your Skills

The most effective skills explicitly embed review markers that guide human reviewers to the decisions that require their expertise. Instead of leaving reviewers to scan every file, the skill instructs the AI to insert specific markers:

- `// REVIEW: business rule` appears wherever domain-specific logic determines output behavior, such as pricing calculations, eligibility checks, or workflow transitions.
- `// REVIEW: security boundary` appears at authentication gates, authorization decisions, data sanitization points, and anywhere sensitive data crosses a trust boundary.
- `// REVIEW: external integration` appears at API client configurations, retry policies, timeout values, and rate limit handling where operational assumptions must be validated.
- `// REVIEW: data model assumption` appears wherever the generated code assumes a specific data shape, relationship cardinality, or nullable field that the domain expert must confirm.
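Because the markers are plain comments with a fixed prefix, collecting them for a reviewer takes very little code. The following sketch scans source text for the four marker kinds listed above; `collectReviewMarkers` and the sample file contents are hypothetical, and a real tool would read files from disk rather than from a string.

```javascript
// Matches the four marker kinds described in the text.
const REVIEW_MARKER =
  /\/\/\s*REVIEW:\s*(business rule|security boundary|external integration|data model assumption)\b/;

// Returns one entry per marker with its file, 1-based line number, and kind.
function collectReviewMarkers(fileName, sourceText) {
  return sourceText.split("\n").flatMap((line, index) => {
    const match = line.match(REVIEW_MARKER);
    return match ? [{ file: fileName, line: index + 1, kind: match[1] }] : [];
  });
}

// Hypothetical generated file used as sample input.
const sampleSource = [
  "async function handle(command) {",
  "  // REVIEW: security boundary",
  "  assertCanConvert(command.userId);",
  "  // REVIEW: business rule",
  "  const fee = command.amount * 0.015;",
  "  return fee;",
  "}",
].join("\n");

const markers = collectReviewMarkers("ConvertCurrencyHandler.js", sampleSource);
for (const marker of markers) {
  console.log(`${marker.file}:${marker.line} ${marker.kind}`);
}
// ConvertCurrencyHandler.js:2 security boundary
// ConvertCurrencyHandler.js:4 business rule
```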

Teams that adopt this pattern report reducing review cycles by 40-60% on structural issues. Reviewers no longer spend time verifying file placement, naming conventions, or import directions. They navigate directly to the REVIEW markers and evaluate only the decisions that genuinely require human expertise.

Opus 4.6 Capabilities That Make Skills Effective

Not every AI model can execute complex skills reliably. The effectiveness of a skill depends on the model's ability to maintain constraint compliance across long outputs, follow nested conditional instructions, and produce structured output that matches precise specifications. Opus 4.6 brings three capabilities that directly impact skill execution quality.

First, extended context processing means the model can hold the entire skill definition, the existing codebase context, and the generation target in memory simultaneously. When a skill references a file generated earlier in the same session, Opus 4.6 maintains consistency without drift.

Second, instruction adherence under complexity is measurably stronger. Skills with 20+ rules test a model's ability to track and apply multiple constraints simultaneously. In comparative evaluations, Opus 4.6 maintains compliance with complex rule sets at rates significantly higher than previous-generation models, particularly for rules that interact with each other (e.g., "validation at Application layer" combined with "no infrastructure imports in the domain layer").

Third, structured output reliability ensures that generated code follows specified formatting, naming, and organizational patterns consistently. When a skill specifies PascalCase for classes, camelCase for functions, and kebab-case for file names, Opus 4.6 applies these conventions uniformly rather than defaulting to the most common pattern in its training data.

Comparing AI Models for Skill Execution

The practical differences between models become apparent when executing the same clean architecture skill repeatedly. Opus 4.6 maintains full constraint compliance across repeated runs, producing structurally identical directory hierarchies with consistent naming, imports, and patterns. GPT-4o follows the primary structural constraints well but occasionally merges related files (combining a Command and its Handler into one file) or drifts on naming conventions in longer outputs. Gemini models handle the high-level directory structure but struggle with nuanced rules like dependency direction enforcement, sometimes generating infrastructure-layer imports in application-layer handlers. The key differentiator is not whether a model can follow simple instructions, but whether it sustains compliance with complex, interacting constraints across outputs exceeding 500 lines.

Frequently Asked Questions

Can skills replace coding standards documentation?

Skills complement coding standards but do not replace them. A skill is an executable encoding of standards that the AI follows during generation. However, standards documentation serves additional purposes: onboarding human developers, explaining the reasoning behind decisions, and covering scenarios that AI generation does not touch (deployment procedures, incident response, monitoring setup). The ideal setup is a living standards document that serves as the source of truth, with skills derived from it as the enforcement mechanism for AI-generated code.

How do you version-control and share skills across a team?

Skills are text files that belong in version control alongside your codebase. Store them in a .claude/skills/ directory at the repository root. Each skill gets its own subdirectory with a SKILL.md file containing the instructions. Because skills are plain text, they benefit from the same review process as code: changes go through pull requests, team members discuss modifications, and the git history tracks how architectural decisions evolve over time. Teams with multiple repositories can maintain a shared skills repository that individual projects reference.

What happens when the AI deviates from skill instructions?

Deviation is rare with Opus 4.6 but not impossible, especially with extremely long or contradictory skill definitions. When it occurs, the deviation is typically visible in the output structure: a missing directory, a merged file, or an incorrect import direction. The fix is iterative refinement of the skill. Identify which instruction was dropped, rephrase it with stronger emphasis or move it earlier in the skill document (where an instruction sits can affect how reliably a model follows it), and regenerate. Over time, skills stabilize as edge cases are addressed.

Are skills transferable between AI models?

Skills are model-agnostic text documents, so they can be used with any instruction-following model. However, execution fidelity varies significantly. A skill written for Opus 4.6 that uses 30+ interacting constraints may work flawlessly with Opus but produce partial compliance with other models. When transferring skills, test with the target model and simplify constraints if needed. The core architectural decisions in the skill remain valid regardless of which model executes them; only the level of constraint complexity may need adjustment.

Conclusion

Development skills represent the missing link between AI code generation speed and engineering rigor. They encode the architectural decisions, coding standards, and structural patterns that define a well-maintained codebase into reusable templates that Opus 4.6 follows with high fidelity. The result is generated code that arrives pre-structured, consistently named, and architecturally sound, reducing the review burden on expert developers by eliminating structural concerns from the review scope entirely. But the expert review layer remains essential. Skills handle the mechanical dimensions of software quality. Business logic correctness, security boundary validation, domain-specific edge cases, and performance tuning under real-world load patterns require human judgment that no instruction template can replace. The most effective teams use skills to handle the 60-70% of code that is structural scaffolding, then concentrate their expert review on the 30-40% that determines whether the software actually solves the right problem correctly. Start with one skill for your most repeated pattern. A single clean architecture skill applied to your primary framework will demonstrate the value within your first sprint, and from there, the practice scales naturally as your team identifies the patterns worth encoding.
